Cloud-side JavaScript "Promise" handling update

Make sure to use the "catch" method on promises!
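As a minimal sketch of that advice in plain JavaScript: the helper names `fetchGreeting` and `greetSafely` below are hypothetical and are not part of any Voximplant API. They stand in for any promise-returning call made from a cloud scenario. The point is only that every promise chain ends in `catch`, so a rejection is handled rather than left unhandled.

```javascript
// Hypothetical promise-returning operation (name and behaviour are assumptions
// made for illustration; substitute any real asynchronous call).
function fetchGreeting(shouldFail) {
  return new Promise((resolve, reject) => {
    if (shouldFail) {
      reject(new Error("request failed"));
    } else {
      resolve("hello");
    }
  });
}

// Ending the chain with catch turns a rejection into a handled fallback value
// instead of an unhandled rejection.
function greetSafely(shouldFail) {
  return fetchGreeting(shouldFail)
    .then((text) => text.toUpperCase())
    .catch((err) => `fallback (${err.message})`);
}
```

Calling `greetSafely(true)` still returns a promise that resolves, because the rejection is absorbed by the `catch` handler. Whether to substitute a fallback value or log and rethrow depends on the scenario.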

New Features in Voximplant Kit: Update overview

We are constantly working to improve our product to make it easier to use and more effective for you. In this update, we have added several useful features. Here’s what’s new:

Voximplant has added Secrets, a dedicated credential store for API keys, tokens, and other sensitive values that VoxEngine scenarios need at runtime.

Voximplant now lets developers build full-cascade voice AI pipelines in VoxEngine without sacrificing turn-taking quality.

Learn how a Voice AI Orchestration Platform connects LLMs, STT/TTS, turn‑taking, and telephony (PSTN, SIP, WebRTC) to build reliable real‑time voice agents. See benefits, architecture, and how Voximplant helps.

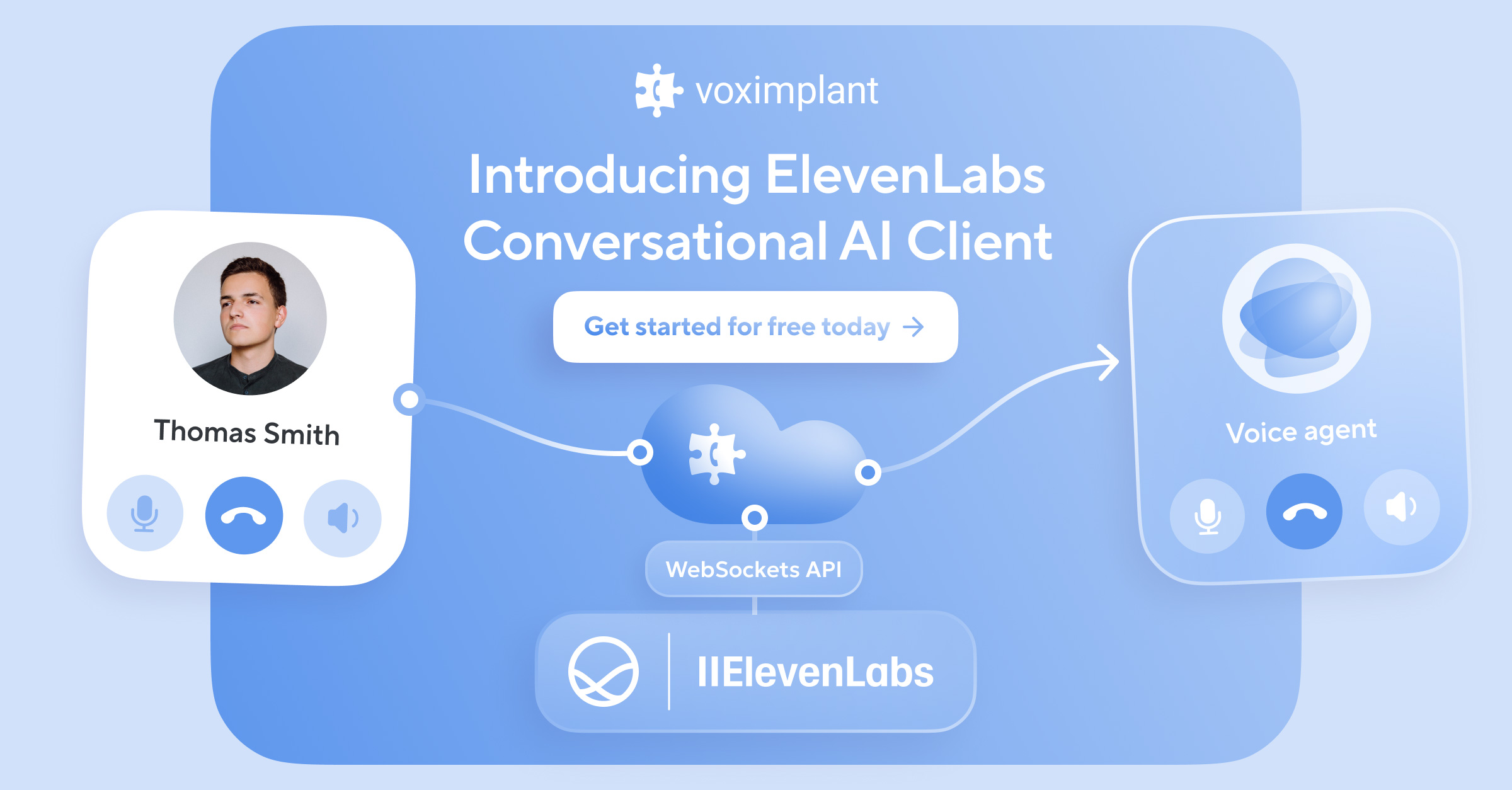

Connect any Voximplant call to ElevenLabs Conversational AI agents.

Voximplant now includes a native Grok module that connects any Voximplant call to xAI’s Grok Voice Agent API for real-time, speech-to-speech conversations. With a single VoxEngine scenario, you can talk to Grok over phone numbers, SIP trunks and infrastructure, WhatsApp Business, or WebRTC, all without building custom media gateways or WebSocket streaming infrastructure.

Today, Ultravox announced that it is directly integrating Voximplant into its platform to provide SIP capabilities. The integration builds on Voximplant’s deep telephony and Voice AI tooling.

Voximplant has added a WebSocket privacy option that redacts message payloads from logs across all WebSocket-based services (Voice AI connectors and external speech systems) and speech control modules.